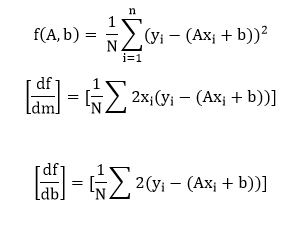

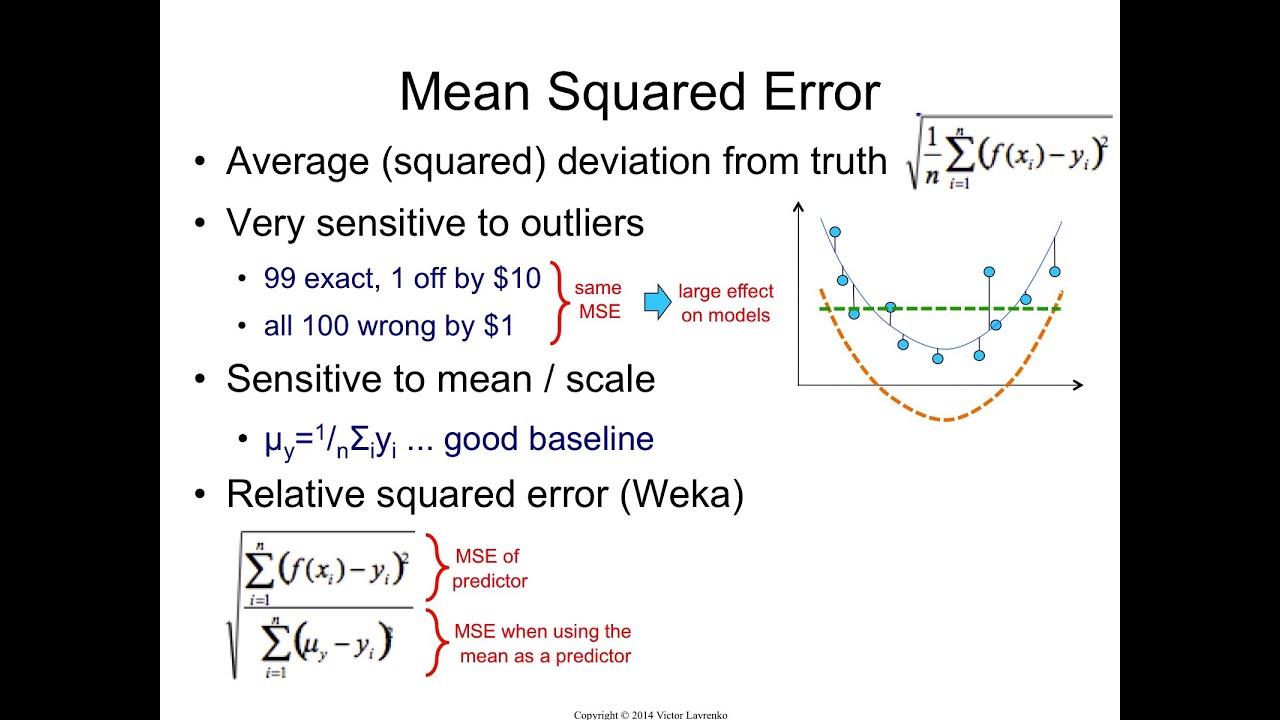

The MSE metric measures the average of the squares of the errors, that is, the average squared difference between the predicted and the observed values. As you can see, we can't simply take plain differences, because positive and negative errors would offset each other. In contrast, when we square each difference, we get a non-negative number, so every individual error increases the sum. In other words, squaring makes positive and negative differences contribute to the final value in the same way. Thanks to squaring, we can say that the smaller the value of MSE, the better the model.

In particular, if the predicted values coincided perfectly with the observed values, MSE would be zero. This, however, almost never happens in practice: MSE is almost always strictly positive because there is almost always some noise (randomness) in the observed values.

The same idea appears in probability theory. Suppose that we would like to estimate the value of an unobserved random variable $X$ given that we have observed $Y=y$. The MSE of an estimate $\hat{x}$ is then the expected squared error, $E\left[(X-\hat{x})^2 \mid Y=y\right]$.

A different loss, cross-entropy, is commonly used for classification. It measures the difference between an actual probability distribution $p$ and a predicted distribution $q$ using negative log probabilities: $H(p, q) = -\sum_x p(x)\log q(x)$. Working with log probabilities has practical advantages. A product of probabilities becomes a sum of logs, and it's easier to take derivatives of sums than of products. It's also far better for floating-point arithmetic (you don't want to lose precision by multiplying a bunch of small numbers together). Log probabilities can be converted back into ordinary probabilities by exponentiating them; a softmax function does this and also normalizes the results so that they sum to one.

Finally, note that squares are not the only option! In the next section, we will tell you, among other things, about MAE (mean absolute error), which uses absolute values instead of squares to achieve the same effect: getting rid of the negative signs of the differences.
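The reasons given above for working with log probabilities are easy to check numerically. The sketch below uses plain Python with only the standard library. It multiplies 400 hypothetical probabilities of 0.1 (the product underflows to zero in double precision, while the sum of logs stays representable), recovers probabilities from log probabilities with a softmax, and computes the cross-entropy $H(p, q) = -\sum_x p(x)\log q(x)$ for a hypothetical one-hot label. The names `softmax` and `cross_entropy` are illustrative helpers, not taken from any particular library.

```python
import math

# Hypothetical probabilities of 400 independent events, each 0.1.
probs = [0.1] * 400

# Multiplying many small numbers: the true value, 1e-400, is below the
# smallest positive double, so the running product underflows to 0.0.
product = 1.0
for p in probs:
    product *= p
print(product)  # 0.0

# Summing log probabilities instead keeps a usable, finite number.
log_sum = sum(math.log(p) for p in probs)
print(log_sum)  # about -921.03


def softmax(scores):
    """Exponentiate and normalize so the outputs sum to one.

    Subtracting the maximum first does not change the result but
    keeps math.exp from overflowing on large scores.
    """
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(p, q):
    """H(p, q) = -sum_x p(x) * log q(x); terms with p(x) == 0 contribute nothing."""
    return -sum(pi * math.log(qi) for pi, qi in zip(p, q) if pi > 0)


# Log probabilities of a hypothetical predicted distribution.
log_q = [math.log(0.7), math.log(0.2), math.log(0.1)]
print(softmax(log_q))  # approximately [0.7, 0.2, 0.1]

true_dist = [1.0, 0.0, 0.0]  # one-hot label: class 0 is the correct one
pred_dist = [0.7, 0.2, 0.1]
print(cross_entropy(true_dist, pred_dist))  # -log(0.7), about 0.357
```

With a one-hot label, the cross-entropy reduces to the negative log probability that the model assigned to the correct class.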

Mean squared error (MSE) is the most commonly used loss function for regression. The loss is the mean, over the seen data, of the squared differences between the true and predicted values. In library documentation, an MSE loss is typically described as one that computes the mean squared error between labels and predictions: after computing the squared distance between the inputs, the mean value over the last dimension is returned.

Squaring matters because, when we add together positive and negative differences, individual errors may cancel each other out. As a result, we can get a sum close to (or even equal to) zero even though the individual terms were relatively large. This could lead us to the false conclusion that our prediction is accurate because the error is low.

So far, we have only used the functions provided by the basic installation of the R programming language.
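The "mean over the last dimension" behavior described above can be illustrated with a batch of samples stored as nested lists. This is a minimal plain-Python sketch, assuming a 2-D batch in which each row holds one sample's outputs; `mse_last_dim` is a hypothetical helper, not any library's actual implementation.

```python
def mse_last_dim(y_true, y_pred):
    """Return one MSE value per row of a 2-D batch.

    For each sample (row), square the element-wise differences and
    average them over the last (innermost) dimension.
    """
    if len(y_true) != len(y_pred):
        raise ValueError("batch sizes differ")
    losses = []
    for row_true, row_pred in zip(y_true, y_pred):
        if len(row_true) != len(row_pred) or not row_true:
            raise ValueError("rows must be non-empty and of equal length")
        squared = [(t - p) ** 2 for t, p in zip(row_true, row_pred)]
        losses.append(sum(squared) / len(squared))
    return losses


# Hypothetical batch of two samples with two outputs each.
y_true = [[0.0, 1.0], [0.0, 0.0]]
y_pred = [[1.0, 1.0], [1.0, 0.0]]

per_sample = mse_last_dim(y_true, y_pred)
print(per_sample)                         # [0.5, 0.5]
print(sum(per_sample) / len(per_sample))  # 0.5, averaged over the batch
```

Averaging the per-sample losses, as in the last line, gives a single scalar for the whole batch.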

Example 3: Calculate MSE Using mse() Function of Metrics Package.

The result is exactly the same as in Example 1. To calculate MSE in MATLAB, we can use `mse(X, Y…`.

What is the MSE? We begin with a discussion of the MSE as a signal fidelity measure. The goal of a signal fidelity measure is to compare two signals.

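The MSE is calculated as:

$$\text{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(\text{actual}_i - \text{forecast}_i\right)^2$$

where $n$ is the number of observations, $\text{actual}_i$ is the $i$-th observed value, and $\text{forecast}_i$ is the corresponding predicted value. The lower the value of MSE, the better a model is able to forecast values accurately.

Wouldn't it be simpler and more intuitive to add up the differences between the actual data and the predictions without squaring them first? No, there are good reasons for taking the squares! Namely, the predicted values can be greater than or less than the observed values, so their differences can be either positive or negative.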
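The formula above translates directly into a few lines of Python. The sketch also computes the plain average of the raw differences, to show how errors of opposite sign cancel when they are not squared. The function names and the two-point data set are hypothetical, chosen only for illustration.

```python
def mse(actual, forecast):
    """Mean squared error: (1/n) * sum((actual_i - forecast_i) ** 2)."""
    if len(actual) != len(forecast):
        raise ValueError("actual and forecast must have the same length")
    if not actual:
        raise ValueError("need at least one observation")
    n = len(actual)
    return sum((a - f) ** 2 for a, f in zip(actual, forecast)) / n


def mean_difference(actual, forecast):
    """Plain average of the raw differences; signs are kept, so errors can cancel."""
    n = len(actual)
    return sum(a - f for a, f in zip(actual, forecast)) / n


# Hypothetical data: one forecast is 3 too high, the other 3 too low.
actual = [10.0, 20.0]
forecast = [13.0, 17.0]

print(mean_difference(actual, forecast))  # 0.0: the two errors cancel
print(mse(actual, forecast))              # 9.0: both errors count
```

A perfect forecast, such as `mse([1.0, 2.0], [1.0, 2.0])`, gives exactly 0.0; any mismatch makes the result strictly positive.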
